If you only have a minute, here's what you need to know.

- Most AI roadmaps are technology wish lists. They catalog tools the organization wants to acquire, not business problems it needs to solve. That is why they do not survive the next budget review. They cannot answer the question every CFO eventually asks: "Which of these initiatives is moving our top line or protecting our margin?"

- Strategic alignment is the second multiplier dimension in the AI Readiness Scorecard. It carries 1.5x weight because clear strategic direction amplifies every dollar invested in data, talent, and technology. Without it, even well-funded AI programs produce impressive demos that never reach production.

- Alignment means two things: every AI initiative traces a direct line to one of the company's top three to five business priorities, and a use case prioritization framework exists to force trade-offs when every team wants AI resources.

- The pattern is consistent across the research: organizations that extract real value from AI invest 70% of their resources in people and process change, 20% in technology and data, and 10% in algorithms. Most organizations invert this entirely.

- This article gives you a disciplined framework for building a strategic AI roadmap that does not collapse under pressure, and a budget review test to determine whether yours is actually aligned.

In the previous article, I covered the five executive sponsorship anti-patterns that explain why most AI initiatives lose their air cover within six months. This article turns to the second multiplier dimension: Strategic Alignment.

If Leadership Commitment is about whether someone is in the room willing to fight for AI, Strategic Alignment is about whether that fight is worth having. A committed sponsor advancing a strategy that is not connected to business outcomes is expensive enthusiasm. It spends the political capital and budget that a real transformation would need.

The technology wish list problem

Here is the pattern. A leadership team approves an "AI roadmap." The document lists 25 to 40 use cases across every function. Copilot for HR. AI-assisted contract review for Legal. Predictive maintenance for Operations. A customer service chatbot. An internal knowledge base. Code generation for Engineering.

The list looks impressive. It demonstrates organizational breadth and signals seriousness about AI. Then the first budget review arrives. The CFO asks which of these initiatives will grow revenue or reduce costs, by how much, on what timeline, and under what assumptions. Nobody has a clear answer. The roadmap gets halved. Six months later it gets halved again.

This is the strategic alignment failure mode, and it is the most expensive one because it burns credibility in addition to budget. When AI initiatives fail to survive scrutiny, leadership draws the wrong lesson: that AI is not ready. The correct lesson is that the AI strategy was never connected to anything that mattered.

McKinsey's research puts it plainly: only 25% of organizations capture significant value from AI. The differentiator between that 25% and everyone else is not model quality, platform selection, or engineering talent. It is the presence or absence of a clear strategic narrative connecting AI investment to business outcomes.

What alignment actually means

Strategic alignment is not about having a vision statement that mentions AI. Two things have to be working simultaneously.

Connecting AI to the company's top priorities

Your organization has three to five things it genuinely has to get right in the next 18 to 24 months. Not aspirations. Imperatives. For a retailer it might be inventory efficiency and shrink reduction. For a bank it might be fraud loss ratios and digital acquisition cost. For a professional services firm it might be billable utilization and proposal win rate. These are the metrics that drive earnings calls and performance reviews.

Every AI initiative that survives should trace a direct line to one of those priorities. Not a stretched, six-degrees-of-separation line. A direct one. If your CEO's stated priority is 200 basis points of margin expansion in North American operations and your AI roadmap leads with a customer service chatbot for EMEA, those do not connect. The chatbot might be a good idea. It is not a strategic priority.

The test is simple: can you write a single sentence explaining exactly which top-three business priority this initiative advances, by what measure, and on what timeline? If you cannot write that sentence in 60 seconds, the initiative is not strategically aligned. It is strategically adjacent, which is another way of saying it is at risk the moment the budget gets tight.

A use case prioritization framework

The second half of alignment is having a framework for saying no. Every team with access to AI tools will generate ideas, and most of those ideas will be reasonable. Without a prioritization mechanism, you end up with the cheerleader sponsor problem at scale: 30 approved pilots, none resourced enough to reach production.

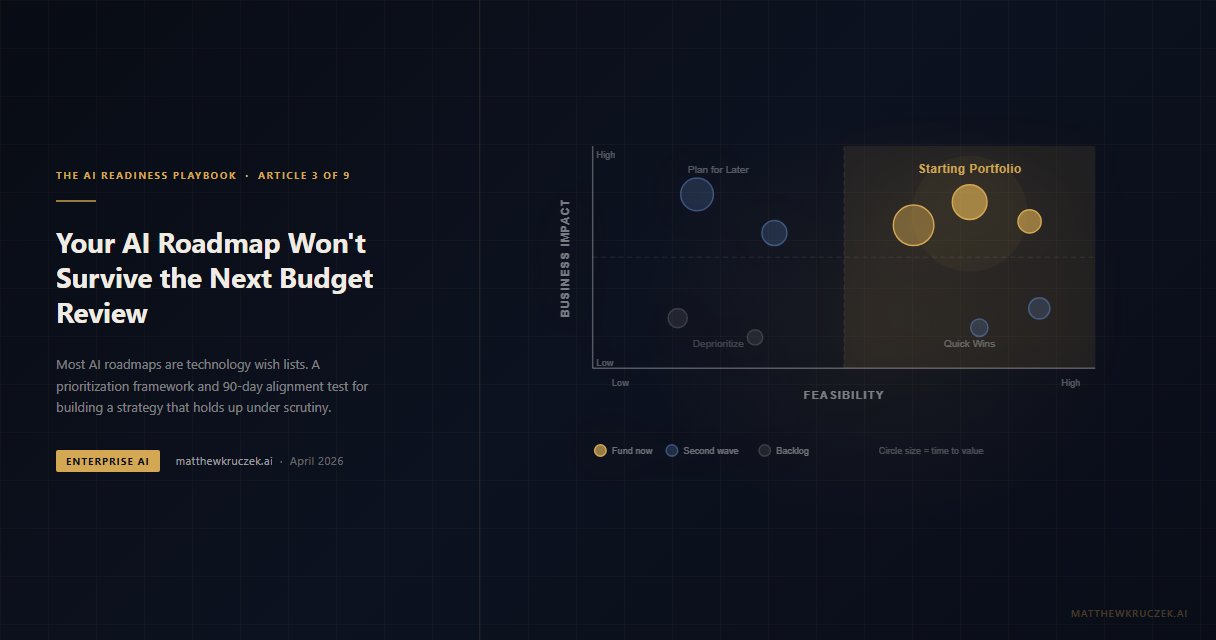

The framework I have seen work consistently evaluates each use case across three dimensions:

Business impact: what is the quantified value if this reaches production? Tie it to a real metric: revenue, cost reduction, cycle time, risk exposure. Avoid "productivity improvements" that cannot be measured against a baseline. A use case that "saves time" is not a business case.

Feasibility: what does this actually require to succeed? Data availability, integration complexity, change management scope, regulatory exposure. Every use case looks feasible in a slide deck. Feasibility scoring forces honest conversations about what is actually ready.

Time to value: how long from investment to measurable outcome? The sweet spot for first initiatives is high impact delivered in under 12 months. Long-horizon initiatives belong in a second wave, after you have proven the model works and built organizational trust in AI outcomes.

Score each use case on all three dimensions. Plot them, for example impact against feasibility, with time to value shown as marker size or color. The top-right quadrant (high impact, high feasibility, near-term value) is your starting portfolio. Everything else is a backlog with clear criteria for when it moves forward.
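The quadrant logic can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the 1-5 scale comes from the scoring step in "What to do this week," while the cutoff of 4 for "high," the `UseCase` type, and the sample use cases are hypothetical. Rather than plotting, it treats "top-right" as scoring high on all three dimensions, and for backlog items it lists which dimensions fall short, which is one way to make "clear criteria for when it moves forward" concrete.

```python
from __future__ import annotations

from dataclasses import dataclass

HIGH = 4  # hypothetical cutoff: a score of 4 or 5 counts as "high" on a 1-5 scale


@dataclass
class UseCase:
    name: str
    impact: int         # quantified business value if it reaches production
    feasibility: int    # data, integration, change management, regulatory readiness
    time_to_value: int  # higher score = faster path to a measurable outcome


def shortfalls(uc: UseCase) -> list[str]:
    """Dimensions where the use case scores below the cutoff."""
    scores = {
        "impact": uc.impact,
        "feasibility": uc.feasibility,
        "time_to_value": uc.time_to_value,
    }
    return [dim for dim, score in scores.items() if score < HIGH]


def classify(uc: UseCase) -> str:
    """Starting portfolio only if high on all three dimensions; otherwise backlog."""
    return "starting portfolio" if not shortfalls(uc) else "backlog"


# Hypothetical scores for illustration only.
use_cases = [
    UseCase("Inventory forecasting", impact=5, feasibility=4, time_to_value=4),
    UseCase("HR copilot", impact=2, feasibility=5, time_to_value=5),
    UseCase("Contract review", impact=4, feasibility=2, time_to_value=3),
]

for uc in use_cases:
    gaps = shortfalls(uc)
    note = f" (raise: {', '.join(gaps)})" if gaps else ""
    print(f"{uc.name}: {classify(uc)}{note}")
```

Listing the shortfalls, rather than just a pass/fail verdict, turns each backlog entry into a stated condition: the HR copilot moves forward only if someone can quantify its impact.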

Building this framework is itself valuable. It forces the executive team to align on what "impact" means in their context. It surfaces disagreements about feasibility that would otherwise emerge as project failures. And it creates a defensible record of how decisions were made, which matters when the CFO asks why you funded these five initiatives and not the other twenty.

Why the 70-20-10 split matters

I wrote about this in "The AI Adoption Paradox": organizations that actually extract value from AI invest 70% of their resources in people and process change, 20% in technology and data, and 10% in algorithms. Most organizations invert this entirely. They spend 60-70% on platforms and infrastructure, 20-30% on data work, and 10% or less on the organizational change that determines whether anyone uses what they built.
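The difference between the two splits is easiest to see as arithmetic. A minimal sketch, assuming a hypothetical budget of 10 million and using 65%, 25%, and 10% as stand-ins for the ranges above (60-70%, 20-30%, 10% or less); the `allocate` helper and the category labels are illustrative, not taken from any tool.

```python
from __future__ import annotations


def allocate(budget: float, split: dict[str, float]) -> dict[str, float]:
    """Divide a budget according to percentage shares that must total 100."""
    if abs(sum(split.values()) - 100) > 1e-9:
        raise ValueError("shares must total 100%")
    return {area: budget * share / 100 for area, share in split.items()}


# The 70-20-10 split described in the text.
VALUE_SPLIT = {"people and process": 70, "technology and data": 20, "algorithms": 10}

# The inverted pattern, using assumed midpoints of the ranges in the text.
INVERTED_SPLIT = {"platforms and infrastructure": 65, "data work": 25, "people and process": 10}

budget = 10_000_000  # hypothetical annual AI budget
target = allocate(budget, VALUE_SPLIT)
actual = allocate(budget, INVERTED_SPLIT)
shortfall = target["people and process"] - actual["people and process"]

print(f"People and process, 70-20-10: {target['people and process']:,.0f}")
print(f"People and process, inverted: {actual['people and process']:,.0f}")
print(f"Underinvestment in change:    {shortfall:,.0f}")
```

On the same budget, the inverted split funds people and process change at one-seventh of the 70-20-10 level, and that is before accounting for the "or less" in the 10% figure.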

This is not an argument against technology investment. A solid platform is necessary. It is an argument about sequencing and proportion. The technology is genuinely the easy part. Anyone with a cloud account has access to world-class AI models today. The competitive advantage is not access to GPT-4 or Claude or Gemini. It is the organizational capability to deploy those models against real business problems and sustain adoption through the friction that always follows.

Strategic alignment is the mechanism that ensures your 70% gets spent on the right problems, in the right sequence, with outcomes defined before the project starts.

The budget review test

Here is a practical diagnostic. Pull up your current AI roadmap and read each initiative against these four questions:

- Which of the company's stated top-three business priorities does this advance?

- What specific metric will improve, by how much, on what timeline?

- Who is the business owner accountable for that metric?

- What does success look like at 90 days, 6 months, and 12 months?

Any initiative that cannot answer all four questions is not ready for investment. It might be interesting. It might be technically feasible. But it is a bet without defined odds, and you will lose it in the first budget review.
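If the roadmap lives in a spreadsheet or tracker, the four questions can be applied mechanically. A minimal sketch: the `Initiative` fields map one-to-one onto the four questions, while the field names, checkpoint labels, and both sample initiatives are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field

CHECKPOINTS = ("90 days", "6 months", "12 months")


@dataclass
class Initiative:
    name: str
    priority: str = ""        # which stated top business priority it advances
    metric: str = ""          # metric, target change, and timeline
    business_owner: str = ""  # person accountable for that metric
    success: dict[str, str] = field(default_factory=dict)  # checkpoint -> success definition


def unanswered(init: Initiative) -> list[str]:
    """Return the budget review questions this initiative cannot yet answer."""
    gaps = []
    if not init.priority:
        gaps.append("business priority")
    if not init.metric:
        gaps.append("metric, target, and timeline")
    if not init.business_owner:
        gaps.append("accountable business owner")
    missing = [c for c in CHECKPOINTS if not init.success.get(c)]
    if missing:
        gaps.append("success definition at " + ", ".join(missing))
    return gaps


# Hypothetical entries for illustration only.
roadmap = [
    Initiative(
        "Shrink reduction model",
        priority="Reduce shrink in North American stores",
        metric="Shrink rate down 0.3 points within 12 months",
        business_owner="VP, Loss Prevention",
        success={
            "90 days": "Pilot live in 20 stores",
            "6 months": "Measured shrink reduction in pilot stores",
            "12 months": "Rolled out across the region",
        },
    ),
    Initiative("EMEA service chatbot", metric="Deflect 20% of tier-1 tickets"),
]

for init in roadmap:
    gaps = unanswered(init)
    verdict = "ready for investment" if not gaps else "not ready: missing " + "; ".join(gaps)
    print(f"{init.name}: {verdict}")
```

The check only confirms that an answer exists; whether the answer is credible is still a judgment for the executive team.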

The organizations that survive scrutiny are not the ones with the most sophisticated AI infrastructure. They are the ones whose AI leaders can sit across from a CFO and say: "We are running five initiatives. Each one connects directly to a stated business priority. Here are the metrics we committed to. Here is where we are against them." That conversation is fundamentally different from "we have 40 pilots underway and adoption is growing."

What JPMorgan and Walmart did differently

Two of the most-cited enterprise AI success stories of the past two years share a structural characteristic: they did not build AI strategies. They built strategies that AI served.

JPMorgan did not set out to "adopt AI broadly." They set out to make investment bankers more productive on pitch materials, reduce engineer time on boilerplate code, and improve call center resolution rates. AI was the implementation path, not the goal. Their LLM Suite was built to serve those defined outcomes. The metrics they track (deck creation time, engineering productivity, call resolution rates) were defined before the platform was built, not after.

Walmart's self-healing inventory system did not emerge from an AI experimentation program. It was the answer to a specific business problem: sales growing faster than inventory could keep up, waste from perishables eating into margin, and out-of-stock incidents damaging the customer experience. The "Wally" agent was not a technology project. It was a supply chain project that required agentic AI to solve. That distinction matters enormously to how the business funded, staffed, and measured it.

Both organizations followed the same principle: define the business outcome first, then determine what AI capability it requires. The organizations that fail do the opposite. They acquire AI capability, then search for problems it can solve.

The 30-use-case trap

One more pattern worth naming. I regularly see organizations with 25 to 40 active AI initiatives. On its face this looks like momentum. In practice it is a sign that nobody is making trade-off decisions.

Every initiative on that list has a sponsor inside a business unit who believes it is important. Every initiative is consuming engineering attention, data team time, and platform resources. And because none of them is resourced well enough to reach production quickly, all of them are stuck in the same place: impressive demo, uncertain path to scale.

The right number of active AI initiatives for a mid-size enterprise is not 30. It is five to eight, fully resourced, with named owners and committed timelines. The rest belong in a backlog with explicit criteria for graduation. Concentrating resources produces production-grade outcomes. Spreading them produces perpetual pilots.

The hardest conversation in AI strategy is not "should we do this?" It is "should we do this instead of the seven other things we have already approved?" The organizations that have that conversation, regularly and honestly, are the ones scoring above 3.0 on Strategic Alignment. The ones that avoid it are the ones with 40 initiatives and nothing in production.

What to do this week

Score your current portfolio. Pull every active AI initiative into a spreadsheet. Apply the three-dimension framework: business impact, feasibility, time to value. Score each initiative on a 1-5 scale per dimension and sum the scores. If the bottom half of your portfolio does not separate cleanly from the top half, your scoring criteria are not specific enough.
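The same scoring pass can be sketched outside a spreadsheet. This assumes one way to measure "falls away cleanly": the gap between the weakest total in the top half and the strongest total in the bottom half. That heuristic, the `MIN_GAP` threshold of 2 points, and the sample portfolio are all hypothetical.

```python
from __future__ import annotations

MIN_GAP = 2  # hypothetical: points that should separate the top half from the bottom half


def rank(portfolio: dict[str, tuple[int, int, int]]) -> list[tuple[str, int]]:
    """Sum impact, feasibility, and time-to-value (each 1-5) and sort from best to worst."""
    for name, scores in portfolio.items():
        if len(scores) != 3 or not all(1 <= s <= 5 for s in scores):
            raise ValueError(f"{name}: expected three scores between 1 and 5")
    totals = [(name, sum(scores)) for name, scores in portfolio.items()]
    # sorted() is stable, so ties keep their original order even with reverse=True.
    return sorted(totals, key=lambda item: item[1], reverse=True)


def separation(ranked: list[tuple[str, int]]) -> int:
    """Weakest top-half total minus strongest bottom-half total (needs 2+ initiatives)."""
    half = len(ranked) // 2
    return ranked[half - 1][1] - ranked[half][1]


# Hypothetical (impact, feasibility, time_to_value) scores.
portfolio = {
    "Demand forecasting": (5, 4, 4),
    "Fraud triage": (5, 3, 4),
    "HR copilot": (2, 5, 4),
    "Marketing copy generator": (2, 4, 5),
    "Contract review": (4, 2, 3),
    "Knowledge base search": (3, 3, 3),
}

ranked = rank(portfolio)
for name, total in ranked:
    print(f"{total:>2}  {name}")

gap = separation(ranked)
if gap < MIN_GAP:
    print(f"Top and bottom halves are {gap} point(s) apart: sharpen the scoring criteria.")
```

In this sample the third- and fourth-ranked initiatives tie, so the check fires. That is the signal the text describes: the criteria are not discriminating, and a low-impact initiative is drifting up on feasibility and speed alone.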

Map to business priorities. Take your five highest-scoring initiatives. For each one, write the single sentence that connects it to a stated business priority. If you cannot write that sentence, that initiative needs rework before further investment, not more engineering time.

Find your CFO's language. Book 30 minutes with your CFO or a finance business partner. Ask: "If our AI program produced one outcome this year that you would consider a genuine success, what would that be?" The answer tells you more about strategic alignment than any roadmap exercise. If the AI team's priorities and the CFO's priorities do not overlap, you have an alignment problem that no prioritization framework will fix.

Stop approving new pilots. If you have more than eight active AI initiatives, implement a one-in-one-out policy: before anything new is approved, one existing initiative must either reach production or be formally cancelled. This constraint forces the honest conversations about priorities that proliferation lets you avoid.
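A one-in-one-out rule is simple enough to encode in whatever tracks the portfolio. A minimal sketch, assuming eight is treated as a hard cap (a new approval that would take the count past eight requires a closure first); the `Portfolio` class, `PolicyViolation` exception, and initiative names are hypothetical.

```python
from __future__ import annotations

MAX_ACTIVE = 8  # threshold from the text, treated here as a hard cap


class PolicyViolation(Exception):
    """Raised when an approval would break the one-in-one-out rule."""


class Portfolio:
    def __init__(self, active: list[str]) -> None:
        self.active = list(active)
        self.closed: dict[str, str] = {}  # initiative -> "production" or "cancelled"

    def close(self, name: str, outcome: str) -> None:
        """Retire an initiative that reached production or was formally cancelled."""
        if outcome not in ("production", "cancelled"):
            raise ValueError("outcome must be 'production' or 'cancelled'")
        self.active.remove(name)  # raises ValueError if the name is not active
        self.closed[name] = outcome

    def approve(self, name: str, retiring: str | None = None, outcome: str | None = None) -> None:
        """Add a new initiative; at or above the cap, one existing initiative must close first."""
        if len(self.active) >= MAX_ACTIVE:
            if retiring is None or outcome is None:
                raise PolicyViolation(
                    f"{len(self.active)} active initiatives: close one before approving {name!r}"
                )
            self.close(retiring, outcome)
        self.active.append(name)


p = Portfolio([f"initiative-{i}" for i in range(1, 10)])  # nine active initiatives

try:
    p.approve("new-pilot")  # rejected: nothing is being retired
except PolicyViolation as err:
    print(err)

p.approve("new-pilot", retiring="initiative-3", outcome="cancelled")
print(len(p.active), p.closed)
```

Requiring an explicit outcome for the retired initiative, production or cancelled, keeps the rule from being satisfied by quietly parking a pilot.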

The scorecard in Article 1 tells you whether your Strategic Alignment dimension is above or below 3.0. Article 2 addressed the sponsorship failures that let misaligned strategies persist. This article gives you the tools to close the gap. The next article in the series moves to Data Readiness, the dimension where 85% of AI projects actually die, long before anyone notices the strategy was the problem.

Matthew Kruczek is Managing Director at EY, leading Microsoft domain initiatives within Digital Engineering. Connect with Matthew on LinkedIn to discuss AI strategic alignment and organizational readiness for your enterprise.

References

- McKinsey. "The State of AI 2025." Only 25% of organizations capture significant value. mckinsey.com

- Kruczek, M. "The AI Adoption Paradox." 70-20-10 investment split; why most organizations invert the ratio. matthewkruczek.ai

- Pertama Partners. "AI Project Failure Statistics 2026." Clear pre-approval metrics: 54% success vs. 12% without.

- McKinsey. "JPMorgan Chase's Derek Waldron on Building an AI-First Bank Culture." October 2025. mckinsey.com

- Walmart. "Retail Rewired Report 2025: Agentic AI at the Heart of Retail Transformation." corporate.walmart.com

- Kruczek, M. "The AI Readiness Scorecard." matthewkruczek.ai

- Kruczek, M. "The AI Executive Sponsor." matthewkruczek.ai

This is Article 3 of 9 in "The AI Readiness Playbook" series, a step-by-step methodology for making your organization AI-ready.