If you only have a minute, here's what you need to know.

- Data readiness is the dimension where 85% of AI projects actually die. Not at the model selection stage, not in the governance review, not at the budget meeting. In the data. Most organizations discover this after they have already spent six months building a platform and hired a team to run it.

- The failure mode is predictable: organizations treat data readiness as a precondition someone else handles before AI work begins. In reality it is a parallel, structured workstream that requires dedicated ownership, a six-month minimum horizon, and executive attention comparable to the AI program itself.

- Data readiness has three sub-dimensions that all have to reach a minimum threshold before AI can produce reliable results: data access (can the right systems reach the right data?), data quality (is that data accurate, complete, and consistent?), and data governance (do the right policies, controls, and accountabilities exist to use it?). Weakness in any one of the three undermines the other two.

- The organizations that accelerate past the data problem are not the ones with cleaner data. They are the ones that treat data as a product, assign a product owner, define quality SLAs, and treat data work as first-class engineering, not a prerequisite that gets thrown over the wall.

- This article gives you the six-month workstream structure, the diagnostic to assess where your data readiness actually stands today, and the specific failure modes that turn a six-month fix into a two-year delay.

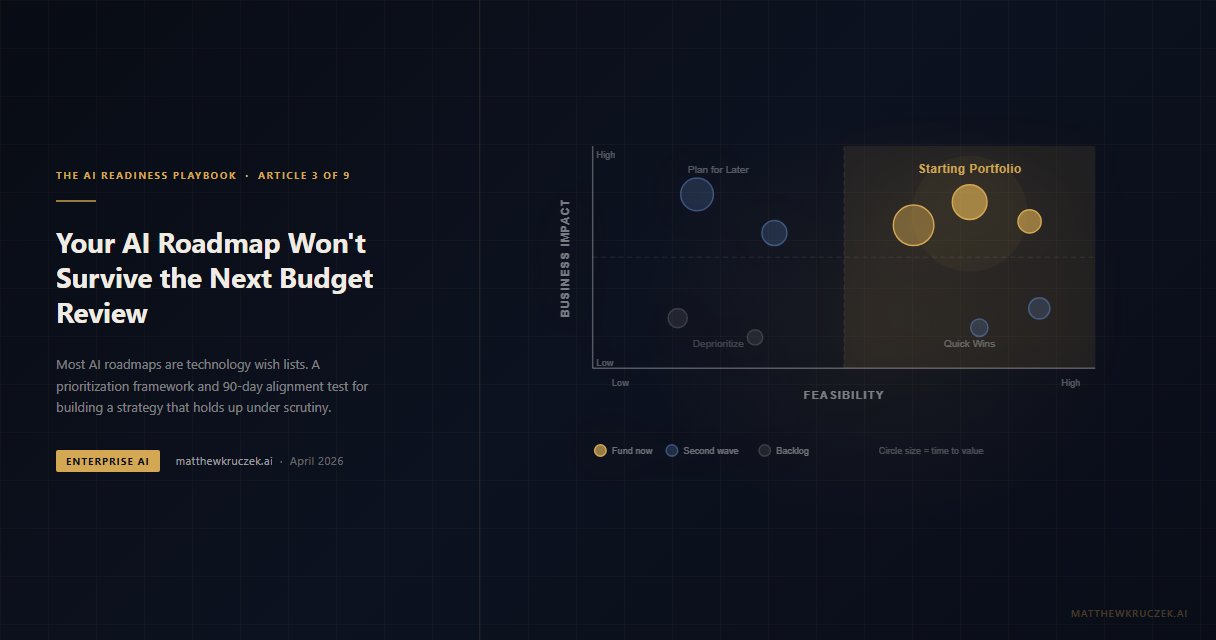

In the previous article, I gave you the framework for building a strategic AI roadmap that survives the first budget review. Strategic alignment determines whether you are solving the right problems. Data readiness determines whether you can solve them at all.

The distinction matters because you can have perfect strategic alignment and still fail completely if your data is not ready. A use case prioritization framework that identifies "predictive customer churn" as your highest-priority initiative produces nothing if the behavioral data that drives churn prediction is fragmented across three CRMs, inconsistently formatted, and six months out of date.

Data readiness is the third dimension of the AI Readiness Scorecard, and it carries the highest failure rate of any dimension. Organizations that score below 3.0 here do not have AI problems. They have data problems that will express themselves as AI failures.

Why 85% of projects die in the data layer

Gartner's research on AI project failure consistently points to data as the primary culprit. Not model architecture. Not compute infrastructure. Not vendor selection. Data.

The specific failure modes cluster into three patterns:

The first is the access problem. The data exists but the AI team cannot reach it. It sits behind system-specific access controls, in on-premises databases that predate API architectures, or in SaaS platforms that require custom export pipelines. The AI team spends months building data plumbing instead of building AI. By the time they have data flowing, the project has consumed most of its timeline without producing a single prediction.

The second is the quality problem. The data is accessible but unreliable. Duplicate customer records. Inconsistent field definitions. Stale snapshots masquerading as current data. Missing values in critical fields. I have seen organizations where the same KPI is calculated differently in five different reporting tools, with no consensus on which number is correct. When your training data contains that kind of noise, your model learns the noise.

The third is the governance problem. The data exists and is accessible and is reasonably clean, but nobody is authorized to use it for AI purposes. PII regulations, data residency requirements, contractual restrictions on secondary use, conflicting interpretations of consent. Legal and compliance reviews that were designed for traditional data warehousing add 90-day delays to each new data source. The AI team waits while lawyers argue about whether a model trained on anonymized transaction data constitutes "processing" under GDPR.

Most organizations face all three problems simultaneously. They discover this after the AI program is already funded and staffed, because nobody ran a data readiness assessment before approving the use cases.

The three sub-dimensions

Data readiness on the scorecard has three components that each require independent assessment. A score of 4.0 on access with a 1.0 on governance does not average to 2.5 in practice. It produces a program that moves fast until it hits a regulatory wall and then stops completely.
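
A minimal sketch of why the average hides the problem. It reports the weakest sub-dimension next to the mean. The function name and the 3.0 quality score are illustrative assumptions, not part of the scorecard itself.

```python
from statistics import mean


def summarize_readiness(access: float, quality: float, governance: float) -> dict:
    """Return the average score and the weakest sub-dimension.

    Illustrative assumption: the weakest score is what constrains the
    program, so it is reported next to the average instead of inside it.
    """
    scores = {"access": access, "quality": quality, "governance": governance}
    weakest = min(scores, key=scores.get)
    return {
        "average": round(mean(scores.values()), 2),
        "weakest_dimension": weakest,
        "weakest_score": scores[weakest],
    }


# Hypothetical scores: strong access, middling quality, weak governance.
summary = summarize_readiness(access=4.0, quality=3.0, governance=1.0)
```

Read this way, the average looks tolerable while the governance score is the number that decides whether the program stops.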

Data access

Access is about whether AI systems can reach the data they need, at the frequency they need it, at the latency that makes the output useful.

A 2.0 on data access looks like this: most of the relevant data exists in enterprise systems, but reaching it requires custom integrations built by scarce engineering resources. Each new data source requires a new pipeline. Data is batch-exported on weekly or monthly schedules, which is adequate for backward-looking reporting but useless for real-time inference. There is no data catalog describing what exists, so the AI team spends significant time just discovering what data is available.

A 4.0 on data access looks like this: a centralized data platform serves as the authoritative source for AI consumption. A queryable data catalog describes available datasets with schema, ownership, update frequency, and quality scores. APIs or event streams provide near-real-time access for latency-sensitive use cases. Pipelines are monitored, versioned, and maintained with the same engineering discipline as production software.
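
To make "queryable data catalog" concrete, here is a small sketch of a catalog entry carrying the attributes listed above (schema, ownership, update frequency, quality scores). The class, field names, and sample datasets are hypothetical. A real catalog would live in a dedicated tool, not an in-memory list.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """One dataset in a hypothetical data catalog."""
    name: str
    owner: str
    update_frequency: str                 # e.g. "hourly", "daily", "weekly"
    schema: dict[str, str]                # column name -> declared type
    quality_scores: dict[str, float] = field(default_factory=dict)


def find_datasets(catalog: list[CatalogEntry], column: str) -> list[str]:
    """Return the names of datasets whose schema contains the given column."""
    return [entry.name for entry in catalog if column in entry.schema]


# Illustrative entries only; names and owners are made up.
catalog = [
    CatalogEntry("crm_accounts", "sales-ops", "daily",
                 {"customer_id": "string", "segment": "string"},
                 {"completeness": 0.97}),
    CatalogEntry("web_events", "digital", "hourly",
                 {"customer_id": "string", "event_type": "string"}),
    CatalogEntry("supplier_invoices", "procurement", "weekly",
                 {"invoice_id": "string", "amount": "decimal"}),
]

datasets_with_customer_id = find_datasets(catalog, "customer_id")
```

The point of the structure is that the AI team can answer "what data exists about customers?" with a query rather than a month of interviews.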

The gap between 2.0 and 4.0 is not primarily a technology gap. Modern data platform tooling is mature and accessible. The gap is an organizational one: whether data engineering is treated as infrastructure work that supports AI or as a separate function that AI must negotiate with.

Data quality

Quality is about whether the data is accurate enough to train reliable models and whether it stays that way over time.

The most common quality problem is not bad data. It is unknown data. Organizations lack quality baselines. They cannot tell you what percentage of their customer records have complete contact information, how frequently their inventory data diverges from physical counts, or what the error rate is in their contract metadata. Without a baseline, you cannot assess readiness, and you cannot detect degradation once AI is in production.

A 2.0 on data quality looks like this: quality issues are known anecdotally but not measured systematically. Data engineers fix problems reactively when a downstream process breaks. There are no quality SLAs. Different teams use different versions of the same dataset without knowing it. Data documentation exists for legacy compliance purposes but does not reflect current reality.

A 4.0 on data quality looks like this: quality is measured automatically for every priority dataset. Completeness, accuracy, consistency, timeliness, and uniqueness are tracked against defined thresholds. Quality alerts fire before downstream AI systems are impacted. A data quality owner (not a database administrator, an accountable business owner) signs off on quality SLAs and is accountable for maintaining them.
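
One way to picture "tracked against defined thresholds" is a check that compares measured scores with an SLA and lists the breaches. This is a sketch under stated assumptions: the threshold values and the function name are invented for illustration, and a missing measurement is treated as a breach.

```python
def check_quality_sla(measured: dict[str, float],
                      thresholds: dict[str, float]) -> list[str]:
    """Return the quality dimensions that are unmeasured or below threshold."""
    breaches = []
    for dimension, minimum in thresholds.items():
        value = measured.get(dimension)
        # An unmeasured dimension counts as a breach: unknown is not "fine".
        if value is None or value < minimum:
            breaches.append(dimension)
    return breaches


# Hypothetical SLA for one priority dataset (fractions between 0 and 1).
sla = {
    "completeness": 0.98,
    "accuracy": 0.95,
    "consistency": 0.95,
    "timeliness": 0.90,
    "uniqueness": 0.99,
}
measured = {"completeness": 0.991, "accuracy": 0.96,
            "consistency": 0.93, "timeliness": 0.97}

breaches = check_quality_sla(measured, sla)
```

In this example the alert would name consistency (below threshold) and uniqueness (never measured), which is the list the data quality owner would be asked to act on.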

The 4.0 standard requires treating data quality like a product attribute, not a cleanup task. The organizations that get there fastest are the ones that assign named accountability for data quality at the dataset level and make that accountability visible to leadership.

Data governance

Governance is about whether the organization has the policies, controls, and processes to use data for AI purposes legally, ethically, and at speed.

This is the sub-dimension most organizations underestimate. They conflate governance with compliance, as if the goal were to avoid fines rather than to enable velocity. Good governance is a speed mechanism. It defines what data can be used for what purposes under what conditions, so AI teams do not have to litigate each use case from scratch.

A 2.0 on data governance looks like this: policies exist but were written for traditional data warehousing. Every new AI use case requiring sensitive data triggers a legal review that takes 60 to 90 days. AI teams route around governance by using only data that does not require approval, which systematically excludes the most valuable data. There is no data classification schema, so everything gets treated as maximally sensitive by default.

A 4.0 on data governance looks like this: a data classification framework exists with four to five levels (for example: public, internal, confidential, restricted, regulated), and each level has pre-approved use cases for AI. A governance committee reviews novel data use cases in a standing two-week sprint rather than ad hoc. Privacy-preserving techniques (differential privacy, synthetic data, federated learning) are available as tooling, not just as theoretical options.

The organizations that treat governance as an enabler rather than a gatekeeper are the ones that move fastest. They do not bypass controls. They invest in making the controls faster.

The six-month workstream structure

Six months is the minimum to move data readiness from a 2.0 to a 3.5 across all three sub-dimensions for a focused set of use cases. It is not six months to fix everything. It is six months to achieve production-grade data readiness for the five to eight initiatives in your starting portfolio.

The structure I have seen work organizes the work into six 30-day phases, run in parallel with initial AI development, not as a prerequisite before it starts.

Days 1 to 30: Inventory and discovery. Map every data source relevant to your starting portfolio. For each source, document: what it contains, who owns it, how it is maintained, what its current access mechanism is, and what is known about its quality. This is not a six-month data cataloguing exercise. It is a focused discovery sprint for the data relevant to the use cases in your starting portfolio. Output: a data map with identified owners, access paths, and known quality issues.

Days 31 to 60: Quality baseline. For each priority dataset, run a quality assessment: completeness, accuracy, consistency, timeliness, uniqueness. Do not clean the data yet. Establish the baseline. You need to know what you are working with before you start fixing it. Output: quality score by dataset, gap analysis against minimum thresholds for each use case, prioritized remediation backlog.
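
A baseline does not need heavy tooling to get started. The sketch below measures three of the five dimensions (completeness, uniqueness, timeliness) on a list of records using only the standard library. Accuracy and consistency are left out because they need a reference source to compare against. The record layout, field names, and seven-day freshness window are assumptions for illustration.

```python
from datetime import date


def baseline(records: list[dict], key: str, required: list[str],
             as_of: date, max_age_days: int) -> dict[str, float]:
    """Measure completeness, uniqueness, and timeliness as fractions of rows."""
    total = len(records)
    if total == 0:
        raise ValueError("cannot baseline an empty dataset")
    # Complete: every required field is present and non-empty.
    complete = sum(
        all(r.get(f) not in (None, "") for f in required) for r in records
    )
    # Unique: distinct key values relative to row count.
    unique_keys = len({r[key] for r in records})
    # Timely: updated within the allowed window (assumes an "updated" date).
    fresh = sum((as_of - r["updated"]).days <= max_age_days for r in records)
    return {
        "completeness": complete / total,
        "uniqueness": unique_keys / total,
        "timeliness": fresh / total,
    }


# Made-up customer records with one blank email, one duplicate id, two stale rows.
customers = [
    {"id": "C1", "email": "a@example.com", "updated": date(2025, 5, 20)},
    {"id": "C2", "email": "",              "updated": date(2025, 5, 28)},
    {"id": "C2", "email": "b@example.com", "updated": date(2024, 11, 2)},
    {"id": "C3", "email": "c@example.com", "updated": date(2025, 6, 1)},
]
scores = baseline(customers, key="id", required=["id", "email"],
                  as_of=date(2025, 6, 1), max_age_days=7)
```

Note that nothing here fixes the data. It only produces the numbers that the gap analysis and remediation backlog are built from.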

Days 61 to 90: Governance framework. Review your use cases against existing governance policies. For each use case, identify the data classification required and whether pre-approved use exists. Draft a classification framework if one does not exist. Engage legal and compliance early with specific use cases rather than abstract AI policy questions. Output: governance decision for each use case (approved, approved with controls, requires review, blocked), and a 90-day backlog for any required reviews.
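
The four governance outcomes can be expressed as a lookup from classification level and purpose to a decision. The sketch below uses the five classification levels named earlier. The policy table itself is entirely hypothetical. A real one comes out of the work with legal and compliance, not out of code.

```python
from enum import Enum


class Decision(Enum):
    APPROVED = "approved"
    APPROVED_WITH_CONTROLS = "approved with controls"
    REQUIRES_REVIEW = "requires review"
    BLOCKED = "blocked"


# Hypothetical policy table: classification level -> purpose -> decision.
POLICY = {
    "public": {"model_training": Decision.APPROVED,
               "inference": Decision.APPROVED},
    "internal": {"model_training": Decision.APPROVED,
                 "inference": Decision.APPROVED},
    "confidential": {"model_training": Decision.APPROVED_WITH_CONTROLS,
                     "inference": Decision.APPROVED_WITH_CONTROLS},
    "restricted": {"inference": Decision.APPROVED_WITH_CONTROLS},
    "regulated": {"model_training": Decision.BLOCKED},
}


def governance_decision(classification: str, purpose: str) -> Decision:
    """Return the pre-approved decision; anything unlisted goes to review."""
    if classification not in POLICY:
        raise ValueError(f"unknown classification: {classification}")
    return POLICY[classification].get(purpose, Decision.REQUIRES_REVIEW)


decision = governance_decision("restricted", "model_training")
```

The design choice worth copying is the default: a combination nobody has ruled on is routed to review rather than silently approved or silently blocked.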

Days 91 to 120: Pipeline infrastructure. Build the data pipelines that connect priority data sources to the AI development environment. These are not production pipelines yet. They are validated paths from source to consumption. Version them. Document them. Treat them as code. Output: functioning data pipelines for priority use cases, documented schema agreements, integration tests.

Days 121 to 150: Remediation and validation. Address the quality issues identified in the baseline that are blockers for your starting portfolio. Not all quality issues, just the ones that break your use cases. Run end-to-end data validation: does the data flowing through your pipeline produce reliable AI outputs? Output: remediated datasets meeting defined quality thresholds, validated pipeline outputs.

Days 151 to 180: Monitoring and handoff. Instrument quality monitoring for production. Define alerting thresholds. Assign operational ownership. Document the runbook for data quality incidents. Output: a production-ready data layer with monitoring, ownership, and escalation paths.

Six months of parallel execution produces a data foundation that can support an AI program. Run the same work sequentially (data first, then AI) and it is 12 months before you have a production-grade outcome. Run them in parallel.

The data debt trap

Most enterprise organizations carry significant data debt, the accumulated consequence of years of system acquisitions, migrations, and integrations that optimized for getting data somewhere rather than getting it right.

Data debt presents in a specific pattern. Field names that mean different things in different systems ("account," "customer," "client"). Records that were migrated with known quality issues because the migration deadline was more important than the data. APIs that return data formatted for the consuming application that originally requested them, not for general-purpose consumption. Timestamps in 14 different formats across 14 different systems.
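
The timestamp problem is a good example of debt that can be contained at the pipeline boundary. This sketch tries a list of known formats and emits a single ISO 8601 UTC representation. The format list is hypothetical, and the function assumes that values without an explicit offset are already in UTC, which a real source inventory would have to confirm.

```python
from datetime import datetime, timezone

# Hypothetical formats; the real list comes out of the discovery phase.
KNOWN_FORMATS = [
    "%Y-%m-%dT%H:%M:%S%z",   # 2024-03-05T16:30:00+0200
    "%Y-%m-%d %H:%M:%S",     # 2024-03-05 14:30:00
    "%m/%d/%Y %H:%M",        # 03/05/2024 14:30 (month first)
    "%d.%m.%Y",              # 05.03.2024
]


def normalize_timestamp(raw: str) -> str:
    """Parse a timestamp in any known format and return ISO 8601 in UTC.

    Assumption: values with no explicit offset are already in UTC.
    """
    for fmt in KNOWN_FORMATS:
        try:
            parsed = datetime.strptime(raw.strip(), fmt)
        except ValueError:
            continue  # Not this format; try the next one.
        if parsed.tzinfo is None:
            parsed = parsed.replace(tzinfo=timezone.utc)
        return parsed.astimezone(timezone.utc).isoformat()
    # Unknown formats are rejected rather than guessed at.
    raise ValueError(f"unrecognized timestamp format: {raw!r}")


normalized = normalize_timestamp("2024-03-05T16:30:00+0200")
```

Formats such as 03/05/2024 are ambiguous between month-first and day-first, so the order of the format list encodes a decision that should be made per source system, not globally.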

The natural reaction is to propose a data cleanup project before AI work begins. This is the wrong sequencing. A comprehensive data cleanup project for an enterprise-scale organization takes two to four years and almost always expands in scope. Organizations that wait for clean data before starting AI work never start AI work.

The correct sequencing is use-case-specific remediation. Clean the data required for your starting portfolio, not all the data. A churn prediction model needs clean behavioral data. It does not need clean procurement data. A contract analysis agent needs clean contract metadata. It does not need a clean HR database. Scoping remediation to use cases keeps the work bounded and maintains momentum.

This is why the prioritization framework from Article 3 matters so much to data readiness. You cannot run use-case-specific remediation without knowing which use cases you are running. Organizations without a prioritization framework end up trying to clean everything simultaneously, which is how a six-month workstream becomes a multi-year delay.

Scoring 2.0 versus 4.0

To calibrate where your organization stands, here is what the difference between a 2.0 and a 4.0 looks like in practice:

A 2.0 organization starts an AI project by assigning an engineer to "figure out the data." That engineer spends four to six weeks discovering relevant data sources, negotiating access with system owners, discovering quality problems they were not warned about, and getting blocked by compliance concerns that nobody anticipated. By week eight, they have a working data pipeline for two of the five required data sources and an open legal review on the third. The AI project is already behind, and the AI team has done no AI work yet.

A 4.0 organization starts an AI project with a data readiness assessment against a pre-existing catalog. Within two weeks, they have a clear picture of which data is available, at what quality level, and under what governance conditions. Pre-approved use cases cover three of the five data sources. A standing governance sprint is already scheduled to review the fourth within 10 days. A data quality owner has been briefed and has committed to a remediation timeline for the fifth. By week eight, the AI team is iterating on models, not waiting for data.

The difference is not luck. It is prior investment in data infrastructure treated as a first-class organizational capability.

What to do this week

Run a data readiness assessment for your starting portfolio. For each of the five to eight use cases in your first portfolio, list the data sources required. For each source, answer three questions: Can we access it today? What is the known quality? Is it approved for AI use? Score each source on a 1-5 scale per sub-dimension. If you cannot score it, you have a discovery problem. A discovery problem is also a data readiness problem.
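
The assessment fits in a spreadsheet, but the rule about unscorable sources is easy to lose there. The sketch below separates sources that can be scored from those that cannot. The source names and scores are invented, and treating a missing score as a discovery problem rather than a zero is the one rule it encodes.

```python
from typing import Optional

SUB_DIMENSIONS = ("access", "quality", "governance")


def assess_sources(
    sources: dict[str, dict[str, Optional[int]]]
) -> dict[str, list[str]]:
    """Split data sources into scored ones and discovery problems.

    A missing or None score means nobody could answer the question,
    which is recorded as a discovery problem instead of a low score.
    """
    result: dict[str, list[str]] = {"scored": [], "discovery_problem": []}
    for name, scores in sources.items():
        values = [scores.get(d) for d in SUB_DIMENSIONS]
        if any(v is None for v in values):
            result["discovery_problem"].append(name)
            continue
        if any(not 1 <= v <= 5 for v in values):
            raise ValueError(f"{name}: scores must be between 1 and 5")
        result["scored"].append(name)
    return result


# Hypothetical sources for a churn prediction use case.
churn_sources = {
    "crm_accounts": {"access": 3, "quality": 2, "governance": 4},
    "web_events": {"access": 2, "quality": None, "governance": 3},
    "support_tickets": {"access": 4, "quality": 3},
}
assessment = assess_sources(churn_sources)
```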

Identify your data owners. For each priority dataset, name the person accountable for its quality and governance status. Not the system owner. Not the DBA. The business owner who uses that data and depends on it being right. If nobody raises their hand, that dataset has no accountability, and no accountability means no quality improvement.

Get legal and compliance in the room early. Do not wait until you have a specific use case in a governance review. Bring your AI program leads and your legal and compliance teams together for a 60-minute briefing on the use cases in your starting portfolio. Ask two questions: Which of these raises a concern? What information do you need from us to resolve it quickly? Organizations that run governance reviews reactively wait 90 days per use case. Organizations that brief early, with specifics, run reviews in two to three weeks.

Treat data pipelines as production software. If your data pipelines are built and maintained differently from your application code, that is a risk. Version them. Test them. Monitor them. Assign ownership. The AI model is only as reliable as the data flowing into it, and a pipeline that breaks silently is worse than a pipeline that fails loudly.
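
"Failing loudly" can be as simple as a validation step that raises instead of passing bad batches downstream. This is a minimal sketch: the schema agreement, column names, and exception class are hypothetical, and a production pipeline would more likely use a dedicated validation library.

```python
class PipelineValidationError(Exception):
    """Raised when a batch is empty or violates the agreed schema."""


# Hypothetical schema agreement: column name -> expected Python type.
EXPECTED_SCHEMA = {"customer_id": str, "event_type": str, "amount": float}


def validate_batch(rows: list[dict]) -> int:
    """Validate a batch and return its row count, raising on any violation."""
    if not rows:
        # An empty batch usually means an upstream export failed quietly.
        raise PipelineValidationError("empty batch received")
    for index, row in enumerate(rows):
        missing = EXPECTED_SCHEMA.keys() - row.keys()
        if missing:
            raise PipelineValidationError(
                f"row {index}: missing columns {sorted(missing)}"
            )
        for column, expected_type in EXPECTED_SCHEMA.items():
            if not isinstance(row[column], expected_type):
                raise PipelineValidationError(
                    f"row {index}: {column} is {type(row[column]).__name__}, "
                    f"expected {expected_type.__name__}"
                )
    return len(rows)


row_count = validate_batch(
    [{"customer_id": "C1", "event_type": "login", "amount": 0.0}]
)
```

The same function doubles as an integration test for the schema agreement: run it against a sample export from each source before the pipeline is promoted.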

The scorecard in Article 1 gives you a numeric anchor for where your Data Readiness dimension sits today. Article 3 gave you the prioritization framework that makes use-case-specific remediation possible. This article gives you the workstream structure to execute against that framework. The next article in the series turns to the AI Operating Model: whether your organization is structured to support AI centrally, through a hub-and-spoke model, or in a federated way, and how that choice determines the speed and governance quality of everything downstream.

Matthew Kruczek is Managing Director at EY, leading Microsoft domain initiatives within Digital Engineering. Connect with Matthew on LinkedIn to discuss AI data readiness and enterprise AI program design.

References

- Gartner. "85 Percent of AI Projects Fail." Primary cause: data quality and accessibility issues. gartner.com

- Kruczek, M. "The AI Readiness Scorecard." Data Readiness as a 1.5x multiplier dimension. matthewkruczek.ai

- IBM Institute for Business Value. "The Data Differentiator." Poor data quality costs organizations an average of $12.9M annually. ibm.com

- MIT Sloan Management Review. "Seizing the Data Advantage." Only 3% of enterprise data meets basic quality standards across five dimensions. sloanreview.mit.edu

- McKinsey. "The State of AI 2025." Organizations with strong data foundations are 2x more likely to reach AI production. mckinsey.com

- Kruczek, M. "Your AI Roadmap Won't Survive the Next Budget Review." Use-case prioritization as the prerequisite for bounded data remediation. matthewkruczek.ai

This is Article 4 of 9 in "The AI Readiness Playbook" series, a step-by-step methodology for making your organization AI-ready.